Metropolia and the University of Eastern Finland jointly organized the IWCLUL workshop (International Workshop on Computational Linguistics for Uralic Languages), which brought together researchers of Finno-Ugric languages from across Europe. The workshop was held as part of the international ACL community and provided an up-to-date overview of language technology research on Uralic languages, especially in the era of artificial intelligence and large language models.

A broad range of Metropolia’s research

Metropolia’s AI research was exceptionally well represented at the workshop. Four full papers produced at Metropolia were accepted for the workshop, addressing both pedagogical and language technology topics from multiple perspectives.

The paper From NLG Evaluation to Modern Student Assessment in the Era of ChatGPT: The Great Misalignment Problem and Pedagogical Multi-Factor Assessment (P-MFA) examined the impact of artificial intelligence on assessment practices in higher education. The study described the Great Misalignment Problem: once students can produce high-quality outputs with generative language models, assessment no longer measures what it is intended to measure. The paper introduced a new Pedagogical Multi-Factor Assessment (P-MFA) model, which emphasizes the learning process, diverse forms of evidence, and pedagogical transparency rather than single final products.

The paper Benchmarking Finnish Lemmatizers across Historical and Contemporary Texts, co-authored with Waseda University, evaluated Finnish lemmatization tools on both contemporary and historical data. The study made use of the Project Gutenberg corpus and, for the first time, included the Trankit tool in a comparison of Finnish lemmatizers. A key finding was that Murre preprocessing significantly improves lemmatization results for dialectal and historical texts, while its impact on modern Finnish is minimal.
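To make the comparison concrete, the following is a minimal sketch of how lemmatization accuracy could be measured with and without a normalization step. It is not the paper's evaluation code: the lookup tables, the function names, and the four-token gold sample are hypothetical stand-ins for a real lemmatizer, a real normalizer such as Murre, and an annotated corpus.

```python
from typing import Callable, Iterable, Tuple


def lemmatization_accuracy(
    pairs: Iterable[Tuple[str, str]], lemmatize: Callable[[str], str]
) -> float:
    """Share of tokens whose predicted lemma equals the gold lemma (case-insensitive)."""
    pairs = list(pairs)
    if not pairs:
        return 0.0
    correct = sum(
        1 for token, gold in pairs if lemmatize(token).lower() == gold.lower()
    )
    return correct / len(pairs)


# Hypothetical toy lemmatizer: a lookup table standing in for a real tool.
TOY_LEXICON = {"taloissa": "talo", "kissat": "kissa", "juoksin": "juosta"}


def toy_lemmatize(token: str) -> str:
    # Unknown tokens are returned unchanged (lowercased).
    return TOY_LEXICON.get(token.lower(), token.lower())


# Hypothetical toy normalizer: maps a dialectal form to standard Finnish.
TOY_NORMALIZATION = {"mie": "minä"}


def toy_normalize(token: str) -> str:
    return TOY_NORMALIZATION.get(token.lower(), token)


# Tiny illustrative gold sample: (token, gold lemma). "mie" is a dialectal form of "minä".
gold = [("taloissa", "talo"), ("kissat", "kissa"), ("juoksin", "juosta"), ("mie", "minä")]

raw_score = lemmatization_accuracy(gold, toy_lemmatize)
normalized_score = lemmatization_accuracy(
    gold, lambda tok: toy_lemmatize(toy_normalize(tok))
)

print(f"without normalization: {raw_score:.2f}")      # 0.75
print(f"with normalization:    {normalized_score:.2f}")  # 1.00
```

In this toy sample the only error comes from the dialectal token, so normalizing before lemmatizing changes the score only for that token. This mirrors, in miniature, why such preprocessing would matter more for dialectal text than for modern standard Finnish.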

A timely application of artificial intelligence to foresight was presented in the paper ORACLE: Time-Dependent Recursive Summary Graphs for Foresight on News Data Using LLMs. The study developed a new method in which temporally evolving recursive summary graphs are constructed from news data using large language models. The ORACLE approach enables the analysis of developments and emerging trends in news content by combining temporal structure with language model–based summarization.

The fourth paper, co-authored with the University of Helsinki, Evaluating OpenAI GPT Models for Translation of Endangered Uralic Languages: A Comparison of Reasoning and Non-Reasoning Architectures, focused on machine translation for endangered Uralic languages. The study compared reasoning-based and non-reasoning architectures of OpenAI’s GPT models and analyzed their performance on low-resource languages. The results provide valuable insights into which types of language model solutions are best suited for supporting small and endangered languages.

Metropolia’s lightning talks: agile openings on topical themes

Metropolia’s visibility at the IWCLUL workshop was not limited to full research papers but extended to the lightning talks as well. The lightning talks gave a concise overview of rapidly developing research directions that are central to language technology for Uralic and other small languages.

The lightning talk UralicMCP: Turning LLMs into Experts in Endangered Languages with MCP presented a new Model Context Protocol (MCP)–based extension to the UralicNLP library. The core idea of UralicMCP is to connect large language models with rule-based language technology tools such as a morphological analyzer, inflector, lemmatizer, and dictionaries. This makes it possible for language models to perform NLP tasks even in endangered Uralic languages for which they have little to no training data. Experiments presented in the lightning talk showed that, with MCP, language models can succeed in tasks that would otherwise be impossible for them.
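The idea of routing a language model's tool requests to rule-based components can be sketched as follows. This is only an illustration under stated assumptions: the request format, the `handle_tool_call` function, and the toy lookup tables are hypothetical, and the code uses neither the actual MCP message format nor the UralicNLP or UralicMCP APIs.

```python
import json
from typing import Callable, Dict

# Hypothetical rule-based tools: toy lookup tables stand in for a real
# morphological analyzer and lemmatizer.
TOY_LEMMAS = {"kissoille": "kissa"}
TOY_ANALYSES = {"kissoille": ["kissa+N+Pl+All"]}


def lemmatize(word: str) -> str:
    return TOY_LEMMAS.get(word, word)


def analyze(word: str) -> list:
    return TOY_ANALYSES.get(word, [])


# Registry of tools the language model is allowed to call.
TOOLS: Dict[str, Callable] = {"lemmatize": lemmatize, "analyze": analyze}


def handle_tool_call(request_json: str) -> str:
    """Dispatch one tool request (hypothetical JSON shape) and return a JSON reply."""
    request = json.loads(request_json)
    name = request["tool"]
    if name not in TOOLS:
        return json.dumps({"error": f"unknown tool: {name}"})
    result = TOOLS[name](**request["arguments"])
    return json.dumps({"result": result}, ensure_ascii=False)


# A model that cannot lemmatize a word itself would emit a request like this one.
reply = handle_tool_call('{"tool": "lemmatize", "arguments": {"word": "kissoille"}}')
print(reply)
```

The design point is that the linguistic knowledge lives in the tools rather than in the model's weights, so the model only needs to decide which tool to call and how to use the answer.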

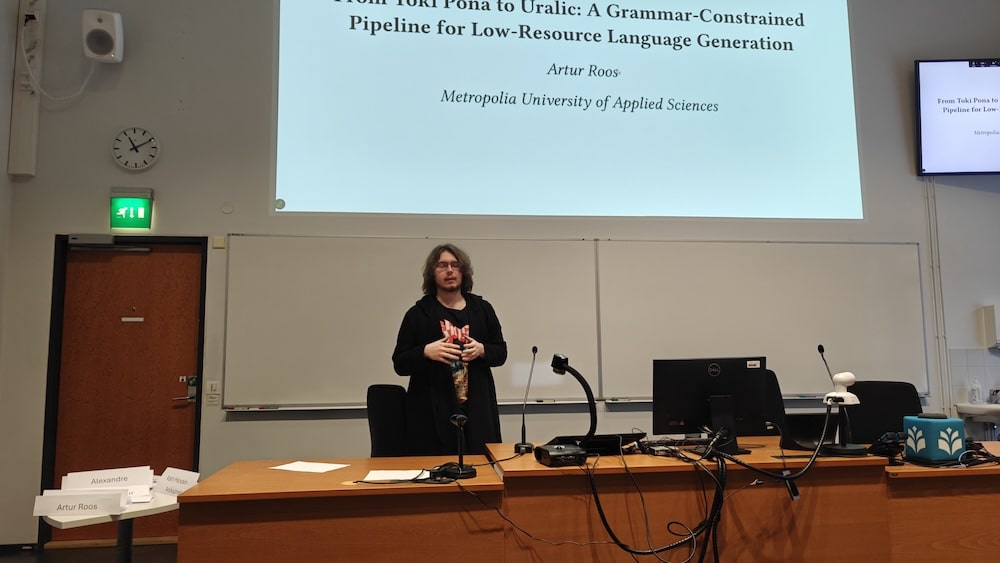

The second lightning talk, From Toki Pona to Uralic: A Grammar-Constrained Pipeline for Low-Resource Language Generation, addressed a methodological approach to training language models for low-resource languages. The work used Toki Pona, a constructed language with a very small vocabulary and a tightly constrained grammar, as a testbed for grammar-guided synthetic data generation. The goal was not Toki Pona itself, but a scalable method that can be transferred to morphologically rich Uralic languages. The lightning talk highlighted how explicit grammatical constraints and validated synthetic data can compensate for the lack of large datasets.
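As a rough illustration of grammar-constrained generation with validation, the sketch below builds Toki Pona sentences from a tiny hand-written grammar and checks each one with a separate validator. It is not the pipeline presented in the talk: the word lists, the single sentence pattern, and the function names are assumptions chosen for brevity. The one grammatical rule it encodes is that the particle `li` follows the subject unless the subject is `mi` or `sina` alone, and `e` introduces the direct object.

```python
from itertools import product

# Small vocabulary sample (assumption: a handful of words, not the full lexicon).
SUBJECTS = ["mi", "sina", "jan", "soweli"]  # I, you, person, animal
VERBS = ["moku", "lukin", "olin"]           # eat, see, love
OBJECTS = ["kili", "telo", "soweli"]        # fruit, water, animal
BARE_SUBJECTS = {"mi", "sina"}              # these subjects take no "li"


def generate_sentences():
    """Yield every subject-verb-object sentence licensed by the toy grammar."""
    for subject, verb, obj in product(SUBJECTS, VERBS, OBJECTS):
        head = [subject] if subject in BARE_SUBJECTS else [subject, "li"]
        yield " ".join(head + [verb, "e", obj])


def is_valid(sentence: str) -> bool:
    """Independently check a sentence against the same toy grammar."""
    tokens = sentence.split()
    if not tokens or tokens[0] not in SUBJECTS:
        return False
    rest = tokens[1:]
    if tokens[0] in BARE_SUBJECTS:
        if rest[:1] == ["li"]:
            return False  # "mi li ..." is not allowed
    else:
        if rest[:1] != ["li"]:
            return False  # other subjects require "li"
        rest = rest[1:]
    return (
        len(rest) == 3
        and rest[0] in VERBS
        and rest[1] == "e"
        and rest[2] in OBJECTS
    )


# Keep only sentences that pass validation before using them as training data.
corpus = [s for s in generate_sentences() if is_valid(s)]
print(len(corpus), corpus[:3])
```

Keeping the validator separate from the generator means it can also filter sentences produced by other sources, for example a language model, before they enter a synthetic dataset.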

The lightning talk Did Karelian Survive the Year? A Small Data Update provided an up-to-date snapshot of the digital vitality of the Karelian language. The talk presented a lightweight yet repeatable data collection process used to analyze Karelian-language online content, particularly in news and article texts. The results showed that Karelian is actively produced online, especially in short news formats, and that even a small but regularly updated dataset can provide meaningful insights into the current state of an endangered language.

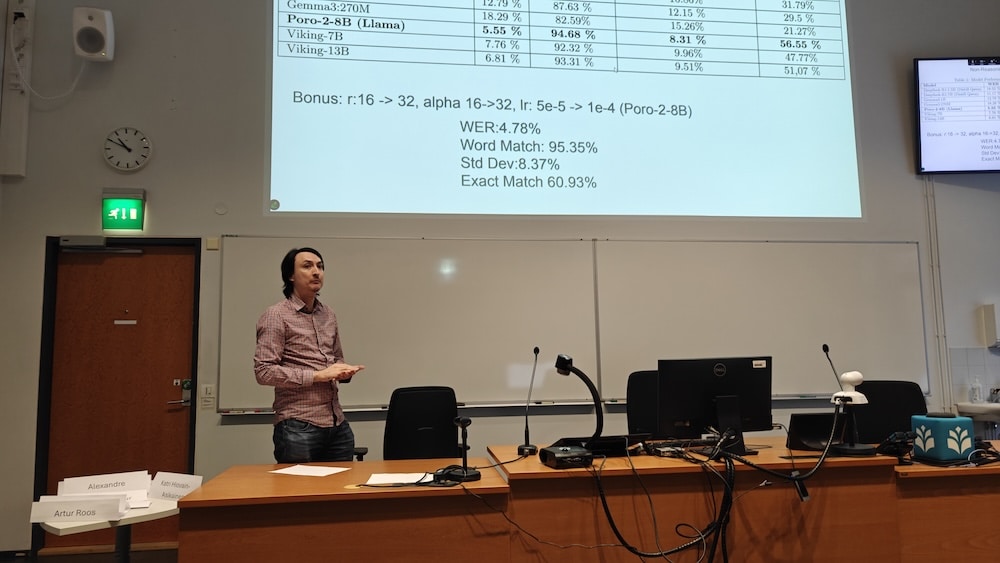

The fourth Metropolia lightning talk, Evaluating Finnish Dialect Normalization in GPT Models with and without Reasoning, focused on dialect normalization of Finnish using language models. The study compared traditionally fine-tuned GPT-style models with models explicitly equipped with reasoning (chain-of-thought). The results showed that strong pretraining in the Finnish language is more crucial than explicit reasoning, and that reasoning-based fine-tuning can even degrade normalization performance in this task. The lightning talk highlighted important insights into when and how reasoning capabilities should be leveraged in language technology applications.

From research to practice: AI in support of small languages

The IWCLUL workshop highlighted how Metropolia’s AI research brings together theoretical linguistics, practical language technology, and societal impact. Both the full research papers and the lightning talks demonstrated that large language models are not viewed at Metropolia as standalone, general-purpose solutions, but rather as tools that can be guided, constrained, and complemented with linguistic expertise.

The common denominator across Metropolia’s presentations was the reality of endangered languages: limited datasets, rich morphology, and the need for transparent and maintainable solutions. Whether the focus was on rethinking assessment in education, translation of Uralic languages, the digital vitality of Karelian, or normalization of dialectal Finnish, the research emphasized approaches that work even when ready-made data or perfect models are not available.

The workshop reinforced Metropolia’s role in the international language technology community as an actor that brings together artificial intelligence, open-source development, and the needs of language communities. At the same time, it demonstrated that research on small languages is not a side track of AI development, but one of its most important testbeds: it is precisely there that the assumptions, limitations, and design choices underlying language models are forced into the open.
