How Metropolia software students worked with hardware and AI and made it to the top 5 of Finland’s largest defense hackathon

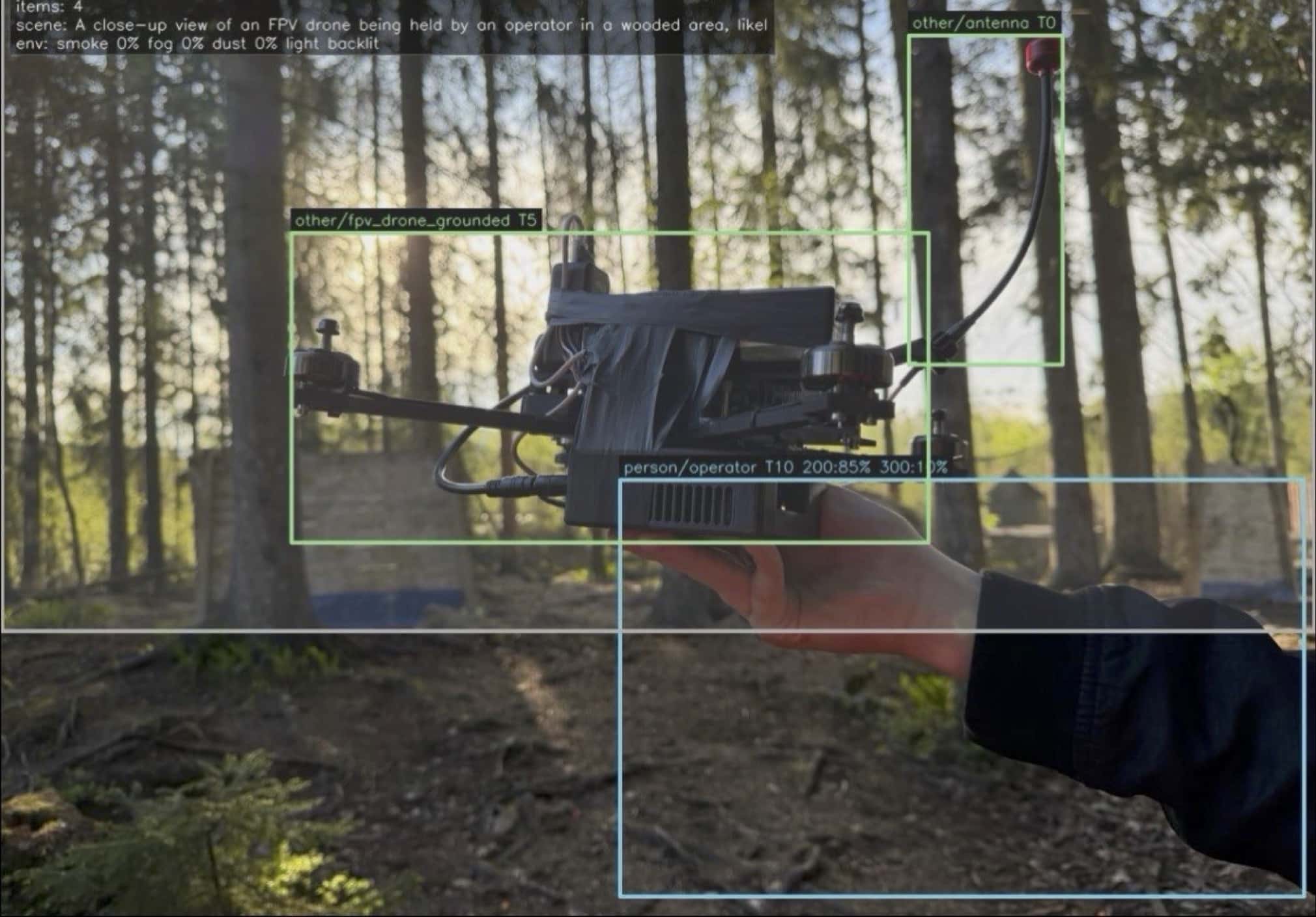

On the battlefield, drones act as soldiers’ eyes, seeing further, and as their brains, thinking faster than human operators can react. An FPV (first-person view) feed does not allow a calm second pass over the scene. There are only milliseconds in which to make the best possible decision: keep observing, reacquire a target or commit to a tactic. The cost of misreading the situation is high: a failed mission.

That pressure is why perception alone is not enough. Seeing the target is not the same as understanding its environment under noise, obstruction and uncertainty. In real-world operations, the system must process sensor data on board and act in a way that is both explainable and safe, without relying on an operator for every frame.

At the Aalto Defense × Junction Hackathon in May 2026, a group of Metropolia students, Yehor Tereshchenko, Nadiia Haidash and Diana Antoniuk, took on exactly that problem: building a real-time perception and reasoning system for autonomous drones using edge compute and sensor data. Rather than creating another demo that only draws YOLO bounding boxes, the goal for the weekend was to develop mission-level behavior, including stable observation, cautious orbit-style tactics and searching when confidence drops.

Our journey

Hackathon time is not calendar time. A task that looks like “two hours” becomes a day; a day becomes a night on the floor. This is how our weekend actually went.

[Image: The team discussing the project]

Friday - “It’s just wiring.”

As with every hardware project, the start was optimistic. The task was to connect the NVIDIA Jetson Orin Nano Super to the drone through a maze of connectors and adapters. In parallel, software development began with the repository structure, the first scripts, and the idea that by Saturday night we would have a camera and something that detects something.

[Image: Designing a path on the floor]

Friday night to Saturday, 02:00 and 04:00 - runs to the store.

When the bench is missing the one connector that makes the story possible, there is no time for debate. You go to the store. Heading out at 2 a.m. and again at 4 a.m. on a mission for parts is a hackathon genre of its own. Everything stayed on the floor, and we kept going. Sleep was a rumour.

[Image: First trip to the store]

[Image: The calm walk to the store 2.0]

Saturday morning - the battery that refused to work.

The Jetson would not reliably power up from the battery path we had engineered. A whole day disappeared into rethinking the power tree, soldering, an ammeter, a step-up module, and the occasional smell of something that was not supposed to get that hot. Fingers included. The solution was almost insultingly simple: stop fighting the universe by adding unnecessary conversion stages. That lesson alone made the Saturday worthwhile.

[Image: Creative chaos]

Saturday day and night - software catches up.

Camera on Jetson. Detection coming alive - first shaky, then repeatable. Simple object detection became a pipeline; the pipeline became telemetry; telemetry became something we could show without apologizing.

[Image: Object detection with first versions of software]

Sunday, 05:00 - leave for the forest.

After working through Saturday night, we left at around 5 a.m. to film a demo video in real outdoor conditions: wind, background noise and a person walking through the frame like a real target. It was cold and early, but absolutely worth it.

[Image: Filming demo video in real outdoor conditions]

Sunday morning - “this is what we built.”

Forest footage, moving targets, the stack still running: see the scene, update belief, show phase and macro on the dashboard. Real conditions do not care about architecture diagrams.

Sunday, one hour before the deadline.

Of course, the polished submission layer came last. In the final hour, we pulled together the application materials - the story, the screenshots and the pitch deck - because builders build first and explain second.

Sunday - the pitch.

Minutes before going on stage, something broke. Again. You don't cancel; you think carefully, fix what you can and demonstrate what works. We gave a live pitch to everyone at the event, showing the detection, the mission state and the loop updating in real time.

[Image: The team pitching]

It was a really rewarding experience. The team was among the top five competing for acceleration. Presenting in front of the entire hackathon audience did not feel like a consolation prize. For a project developed over a weekend, it proved that the idea could withstand the challenges of hardware, deadlines and public presentation. Although we didn't win the overall prize, we received feedback saying that we had exceeded the scope of the challenge. We can't call it a loss - it was a win for us! Hackathons teach you two things at once: ship the demo and learn how big the idea wants to become.

What was actually built

Our weekend build focused on vision-follow and mission tactics on edge hardware, demonstrated in simulation and bench conditions with live telemetry, as a foundation for higher-stakes use cases.

[Image: Happily holding a drone, which survived a pitch]

Perception transforms the camera stream into structured evidence. Using an onboard camera, class detection, person-centric filtering and AI-based computer vision, the system identifies and tracks relevant targets over time. These outputs are packaged as observations: Is someone present? Is there motion, occlusion or instability? This layer answers the question, “What does the machine think it sees right now?”

[Image: System detecting]

Reasoning sits on top of this. A decision engine evaluates those observations, then selects a mission intent and a high-level mode, such as holding and observing, following cautiously, or resuming search when belief weakens. This is intentionally above low-level motor commands. The flight-critical loop stays deterministic and fast; optional slower AI narration runs beside it, not inside it.

See. Decide. Commit. Repeat.

The system sees: it recognises and interprets pixels as evidence. It decides on a tactic within constraints. It then commits, with the control and executor layers translating intent into safe cues. Then it repeats this process for every frame.

LLM narration runs on a separate slow path: a background thread snapshots telemetry every ~6 seconds and calls Ollama (a ~3B-class model). The prompt is a compressed slice of mission state and loop health. The reply is advisory only; it never blocks the hot path. If Ollama is unreachable, the flight loop and planner keep going; the UI simply shows that the narrative channel failed.

Nano-class models for fast object detection were the spine: low latency on Jetson, person-first for vision-follow, with room to add classes and heads. Pose and “vital” structure were on the roadmap: pose estimation and finer body structure, to move from a box on a human to a model of where the system is looking.

[Image: Object detection]

We designed toward a richer battlespace picture:

Ammunition and gear cues: helmets, armor, ballistic goggles, ear protection; weapon families (rifle, shotgun, pistol, EM weapons, drone counter-UAS nets, and similar categories as training labels).

Obscurants and cover: structured cover, masking nets, smoke/fog/haze. The aim is detection under degraded visibility, not only clear-sky demos.

Not just “human”: separating soldiers, civilians, operators, volunteers and press; status bands (alive, lightly injured, KIA-class outcomes) and risk of escalation along a timeline. This is ethically fraught, technically interesting, and exactly the kind of problem defense AI forces you to confront carefully.

[Image: The system tracking a target in real outdoor conditions]

Under the hood, AeroRozum runs on an NVIDIA Jetson Orin Nano Super devkit, wired to a drone stack and USB cameras. Connecting it to a drone stack and a moving-target scenario was more complicated than the architecture diagrams suggested.
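
The split between the deterministic fast path and the advisory slow path can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the code we ran on the Jetson: the mode names, the confidence thresholds and the `Narrator` class are all hypothetical, and the `narrate` callable stands in for whatever would call the language model. Only the ~6-second snapshot period and the "never block the hot path" rule come from the description above.

```python
import threading
import time
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Observation:
    """One frame's structured evidence (hypothetical fields)."""
    person_present: bool
    confidence: float  # detector confidence in [0, 1]
    occluded: bool = False


def select_mode(obs: Observation,
                follow_threshold: float = 0.7,   # assumed value
                search_threshold: float = 0.3) -> str:  # assumed value
    """Fast path: deterministic mapping from one observation to a mode."""
    if not obs.person_present or obs.confidence < search_threshold:
        return "SEARCH"
    if obs.occluded or obs.confidence < follow_threshold:
        return "HOLD_OBSERVE"
    return "FOLLOW_CAUTIOUS"


class Narrator:
    """Slow path: periodically snapshots telemetry and asks an advisory model."""

    def __init__(self, narrate: Callable[[dict], str], period_s: float = 6.0):
        self._narrate = narrate          # stand-in for the LLM call
        self._period_s = period_s
        self._lock = threading.Lock()
        self._telemetry: dict = {}
        self.last_text: Optional[str] = None
        self.last_error: Optional[str] = None
        self._stop = threading.Event()
        self._thread = threading.Thread(target=self._run, daemon=True)

    def publish(self, telemetry: dict) -> None:
        """Called from the hot path; only copies a small dict under a lock."""
        with self._lock:
            self._telemetry = dict(telemetry)

    def start(self) -> None:
        self._thread.start()

    def stop(self) -> None:
        self._stop.set()
        self._thread.join()

    def _run(self) -> None:
        while not self._stop.is_set():
            with self._lock:             # lock is released before the slow call
                snapshot = dict(self._telemetry)
            try:
                self.last_text = self._narrate(snapshot)
                self.last_error = None
            except Exception as exc:     # narrator down: record it, keep going
                self.last_error = str(exc)
            self._stop.wait(self._period_s)


def run_loop(frames: List[Observation], narrator: Narrator,
             frame_period_s: float = 0.0) -> List[str]:
    """Hot loop: decide per frame, publish telemetry, never wait on narration."""
    modes = []
    for i, obs in enumerate(frames):
        mode = select_mode(obs)
        narrator.publish({"frame": i, "mode": mode, "confidence": obs.confidence})
        modes.append(mode)
        time.sleep(frame_period_s)
    return modes
```

The design point is that the hot loop only ever touches `publish`, which holds the lock for the duration of a dictionary copy. The slow call happens outside the lock, so a hung or failing narrator shows up as `last_error` for the UI while mode selection carries on unaffected.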
[Image: The hardware stack]

The concept we developed was an on-device, VLA-style vision-language model rather than a cloud-only copilot for drones. A fast detection pipeline feeds structured world state, while a mission planner LLM retains contextual information about the payload, mission intent, surroundings and recent history, so that recommendations remain grounded in what the edge stack can actually perceive. This combination - milliseconds for perception, seconds for narration and planning, and deterministic control in between - is the architectural design.

[Image: The team working]

Lessons learnt

We arrived as students, fuelled by previous courses, caffeine and the kind of teamwork that only comes when time is of the essence. We left having learned several things. When we think “this will take an hour”, we should mentally translate that to “maybe a day”, and that is normal. Power electronics can humble you faster than coding. Debugging 20 minutes before a demo is not a failure - it's part of the job. Redoing a drone power path while the judges are scheduling the next team is still an achievement if you walk on stage and demonstrate your progress. AI on the edge is not just one model: it is a combination of detection and belief, macros, slow language and logs, and the art lies in keeping those elements separate and together at the same time.

We are tired, we are proud, and we are not finished yet! From here, the path involves field hardening, a cleaner camera setup on Jetson, a tighter progression from simulation towards cautious hardware integration, and more time outside with the same approach: measure, explain, repeat. If we want autonomy to feel understandable rather than mystical, then we must treat every frame as a decision. We built AeroRozum to make those decisions visible.

The Team

The contents of this blog reflect the collective effort of Metropolia students Yehor Tereshchenko, Nadiia Haidash and Diana Antoniuk, who participated in the hackathon.
With gratitude to Metropolia for the foundation that let students attempt something this ambitious; thanks to Aalto Defense, Junction, partners, mentors, and every team that shared the floor with us.

[Image: An attempt to set up a servo drop mechanism at 3 a.m.; it was not included in the final setup]

Artificial intelligence requires continuous renewal of one’s own thinking

Did you try using AI, only to find that it didn’t produce the desired result? Or perhaps you ended up spending time fixing the mistakes it made. It may be that the AI you used isn’t yet good enough to solve your problem, but it’s also very likely that you used it incorrectly, relying on old ways of working. AI works better when you dare to question your own approach: do things always have to be done this way?

Email takes twice as much working time as fax

Let’s imagine a world where an employee is used to typing a document on a typewriter and then faxing it to the recipient. The process is simple: once the document is ready, it’s placed in the fax machine and, with the push of a button, it’s sent.

When the employee is told to use email instead of fax, they become frustrated. Now the work takes twice as long! First, the document has to be typed on a typewriter, and since it can’t be fed directly into a computer, it has to be retyped in a word processor before it can be sent by email. Surely working time could be used more efficiently? Email was supposed to make everyday life easier!

Only when the employee questions their way of working and changes it do they gain the real benefits of email. Why does the document have to be written first on a typewriter? Could it instead be written directly on a computer? Is it even necessary for a paper copy of the document to exist somewhere in a folder?

Many clever people can come up with good reasons to cling to old ways of working. For example, they might argue that writing on a computer weakens literacy and accuracy, because spelling errors are automatically highlighted and mistakes are too easy to fix, so you can write carelessly, whereas a typewriter requires real professional skill, and so on. But these are just excuses. The world is changing, and we must change with it.

AI shouldn't be used because...

In higher education, the same arguments against using AI tend to come up repeatedly.

“Students will never learn the conventions of scientific writing if they use AI to write everything.”

To this, I would like to pose a question: what are the conventions of scientific writing actually needed for? In my opinion, no longer for anything at all. Let AI do what it does well, and leave to humans what AI cannot achieve. I know many academically very talented and intelligent people who simply are not good writers. Writing skill and the ability to conduct research and produce new knowledge do not go hand in hand. It is actually unfair that academic success has so far been measured by the ability to arrange words into an eloquent form.

“Writing is thinking, and if you don’t do it, you won’t learn to think.”

This argument makes me sad. I feel sorry for those who cannot think without writing. You can think and reflect better without pen and paper, if you give it time. For example, a one-hour walk outside gets your thoughts flowing in a completely different way than staring at a blank page. And going for a walk is something AI will never do on anyone’s behalf.

“AI consumes a lot of electricity and harms the environment.”

If someone wants to rely on this argument, I hope they also refrain from using social media, watching videos, or listening to music via streaming. Hopefully, they also don’t play video games or use a computer or phone for anything beyond absolute necessities. Of course, AI uses electricity, but so does everything else. If this argument is used to avoid only AI, while continuing to use all other data-center-driven services, it sounds somewhat hypocritical.

“AI doesn't know how to write”

Sometimes I wonder how people have the patience to use AI for brainstorming ideas and outlining structure, only to then become frustrated when the AI can’t actually write the article. This is an example of how old ways of working prevent the effective use of AI. Many people cling to the idea that text is somehow the ultimate end goal of writing.
In my view, the text itself is secondary, unless we are talking about novels and poetry. More important than the text is conveying an idea, and yes, that is an idea the person must have themselves.

I can get perfectly good articles out of AI when I provide it with the desired structure and clearly explain the main idea of the article, along with the key points of thinking and argumentation under the headings. And just like that, the AI produces exactly the kind of article I wanted. If something is off, I simply ask the AI to fix it. I don’t start manually editing anything longer than individual sentences.

Of course, it may be that the AI’s text doesn’t fully please you or doesn’t feel like your own. What matters more, however, is asking yourself: does this say what I wanted to say? ChatGPT will probably never write in exactly the same way as I do, but that doesn’t matter. The style may feel unfamiliar, but if the content reflects my thinking, I’m satisfied. I have abandoned the old-fashioned idea of ownership of the text. For me, it is enough that I own the idea behind it.

What does the future of work life require?

Now, and increasingly in the future, we must break away from old practices. Nothing should be done just because it has to be done, or because it’s supposedly necessary, and certainly not because ‘it might be good to do.’ Why does it have to be done? Why is it necessary? What are we really aiming for? Supply AI with the intellectual core of the work, the part that requires your expertise, and let it handle everything else around it.

This requires creativity and self-criticism. Unfortunately, our education system does not support the development of creativity in all fields. This is a shame, because continuously adapting one’s way of working as AI evolves is not possible without creativity.

I challenge you, dear reader, to reflect on how you could do your work differently. Start by dismantling the ‘musts’ and question why you do what you do in the first place.
If this text provoked a reaction in you, I hope you reflect carefully on where that reaction comes from. In the end, the question is which side of history you want to be on after the AI revolution.

Metropolia’s AI research featured strongly at an international workshop

Metropolia and the University of Eastern Finland jointly organized the IWCLUL workshop (International Workshop on Computational Linguistics for Uralic Languages), which brought together researchers of Finno-Ugric languages from across Europe. The workshop was held as part of the international ACL community and provided an up-to-date overview of language technology research on Uralic languages, especially in the era of artificial intelligence and large language models.

A broad range of Metropolia’s research

Metropolia’s AI research was exceptionally well represented at the workshop. Four full papers produced at Metropolia were accepted, addressing both pedagogical and language technology topics from multiple perspectives.

The paper “From NLG Evaluation to Modern Student Assessment in the Era of ChatGPT: The Great Misalignment Problem and Pedagogical Multi-Factor Assessment (P-MFA)” examined the impact of artificial intelligence on assessment practices in higher education. The study highlighted the so-called Great Misalignment Problem, where assessment no longer measures what it is intended to measure when students can produce high-quality outputs using generative language models. The paper introduced a new Pedagogical Multi-Factor Assessment (P-MFA) model, which emphasizes the learning process, diverse forms of evidence, and pedagogical transparency rather than single final products.

The paper “Benchmarking Finnish Lemmatizers across Historical and Contemporary Texts”, co-authored with Waseda University, evaluated Finnish lemmatization tools on both contemporary and historical data. The study made use of the Project Gutenberg corpus and, for the first time, included the Trankit tool in a comparison of Finnish lemmatization. A key finding was that Murre preprocessing significantly improves lemmatization results for dialectal and historical texts, while its impact on modern Finnish is minimal.
[Image: Aki Morooka talking about normalization experiments]

A timely application of artificial intelligence to foresight was presented in the paper “ORACLE: Time-Dependent Recursive Summary Graphs for Foresight on News Data Using LLMs”. The study developed a new method in which temporally evolving recursive summary graphs are constructed from news data using large language models. The ORACLE approach enables the analysis of developments and emerging trends in news content by combining temporal structure with language-model-based summarization.

The fourth paper, “Evaluating OpenAI GPT Models for Translation of Endangered Uralic Languages: A Comparison of Reasoning and Non-Reasoning Architectures”, co-authored with the University of Helsinki, focused on machine translation for endangered Uralic languages. The study compared reasoning-based and non-reasoning architectures of OpenAI’s GPT models and analyzed their performance on low-resource languages. The results provide valuable insights into which types of language model solutions are best suited for supporting small and endangered languages.

Metropolia’s lightning talks: agile openings on topical themes

Metropolia’s visibility at the IWCLUL workshop was not limited to full research papers but extended strongly to the lightning talks as well. The lightning talks provided a concise yet substantively rich overview of rapidly developing research directions that are central to language technology for Uralic and other small languages.

The lightning talk “UralicMCP: Turning LLMs into Experts in Endangered Languages with MCP” presented a new Model Context Protocol (MCP)-based extension to the UralicNLP library. The core idea of UralicMCP is to connect large language models with rule-based language technology tools such as a morphological analyzer, inflector, lemmatizer, and dictionaries.
This makes it possible for language models to perform NLP tasks even in endangered Uralic languages for which they have little to no training data. Experiments presented in the lightning talk showed that, with MCP, language models can succeed in tasks that would otherwise be impossible for them.

[Image: Lev Kharlashkin addressing the current state of the Karelian language]

The second lightning talk, “From Toki Pona to Uralic: A Grammar-Constrained Pipeline for Low-Resource Language Generation”, addressed a methodological approach to training language models for low-resource languages. The work used an extremely controlled language, Toki Pona, as a testbed for grammatically guided synthetic data generation. The goal was not Toki Pona itself, but a scalable method that can be transferred to morphologically rich Uralic languages. The lightning talk highlighted how explicit grammatical constraints and validated synthetic data can compensate for the lack of large datasets.

The lightning talk “Did Karelian Survive the Year? A Small Data Update” provided an up-to-date snapshot of the digital vitality of the Karelian language. The talk presented a lightweight yet repeatable data collection process used to analyze Karelian-language online content, particularly in news and article texts. The results showed that Karelian is actively produced online, especially in short news formats, and that even a small but regularly updated dataset can provide meaningful insights into the current state of an endangered language.

The fourth Metropolia lightning talk, “Evaluating Finnish Dialect Normalization in GPT Models with and without Reasoning”, focused on dialect normalization of Finnish using language models. The study compared traditionally fine-tuned GPT-style models with models explicitly equipped with reasoning (chain-of-thought).
The results showed that strong pretraining in the Finnish language is more crucial than explicit reasoning, and that reasoning-based fine-tuning can even degrade normalization performance in this task. The lightning talk highlighted important insights into when and how reasoning capabilities should be leveraged in language technology applications.

[Image: Artur Roos explaining what Uralic languages can learn from synthetic languages]

From research to practice: AI in support of small languages

The IWCLUL workshop highlighted how Metropolia’s AI research brings together theoretical linguistics, practical language technology, and societal impact. Both the full research papers and the lightning contributions demonstrated that large language models are not viewed at Metropolia as standalone, general-purpose solutions, but rather as tools that can be guided, constrained, and complemented with linguistic expertise.

The common denominator across Metropolia’s presentations was the reality of endangered languages: limited datasets, rich morphology, and the need for transparent and maintainable solutions. Whether the focus was on rethinking assessment in education, translation of Uralic languages, the digital vitality of Karelian, or normalization of dialectal Finnish, the research emphasized approaches that work even when ready-made data or perfect models are not available.

The workshop reinforced Metropolia’s role in the international language technology community as an actor that brings together artificial intelligence, open-source development, and the needs of language communities. At the same time, it demonstrated that research on small languages is not a side track of AI development, but one of its most important testbeds: it is precisely there that the assumptions, limitations, and design choices underlying language models are forced into the open.